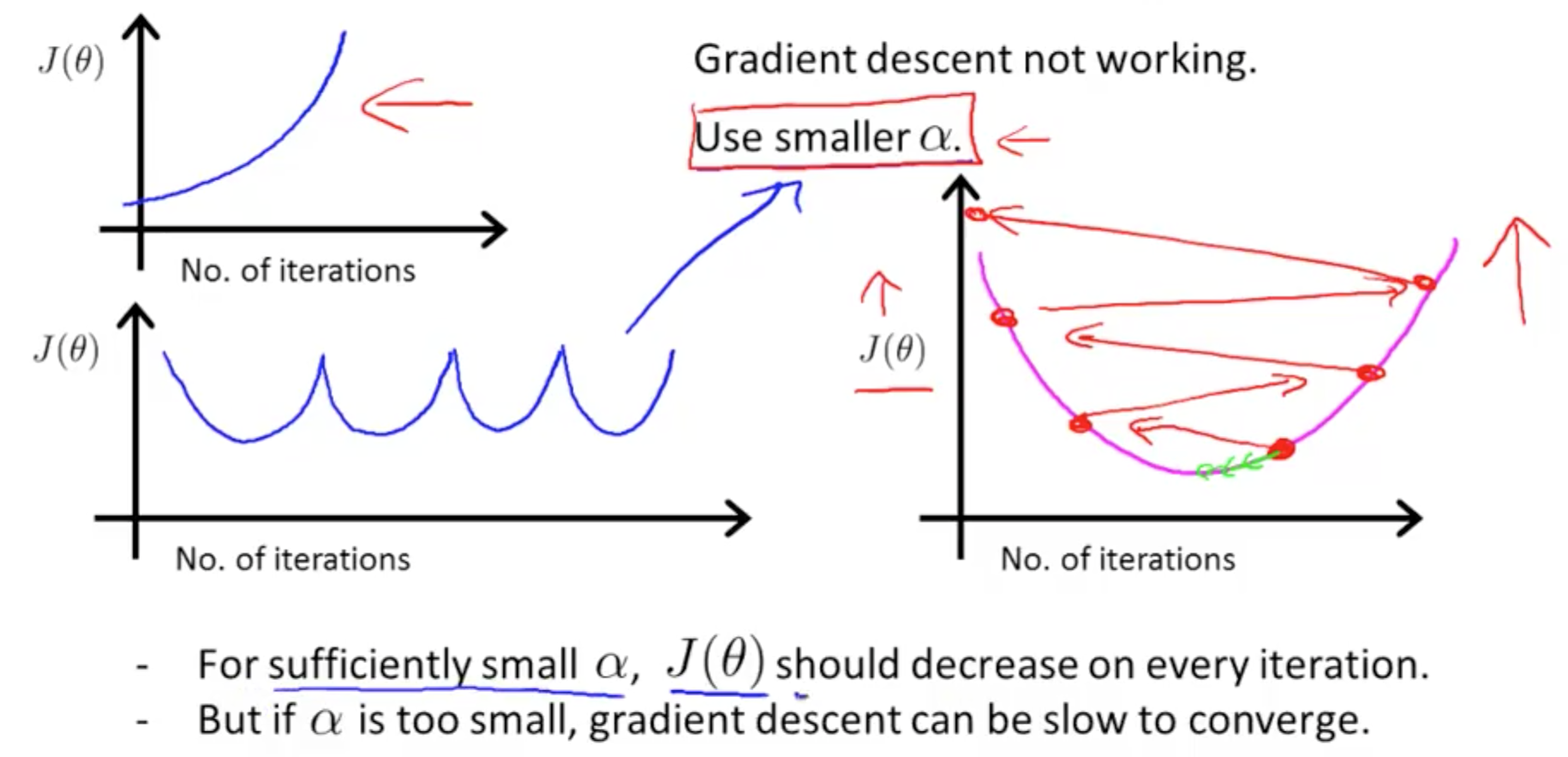

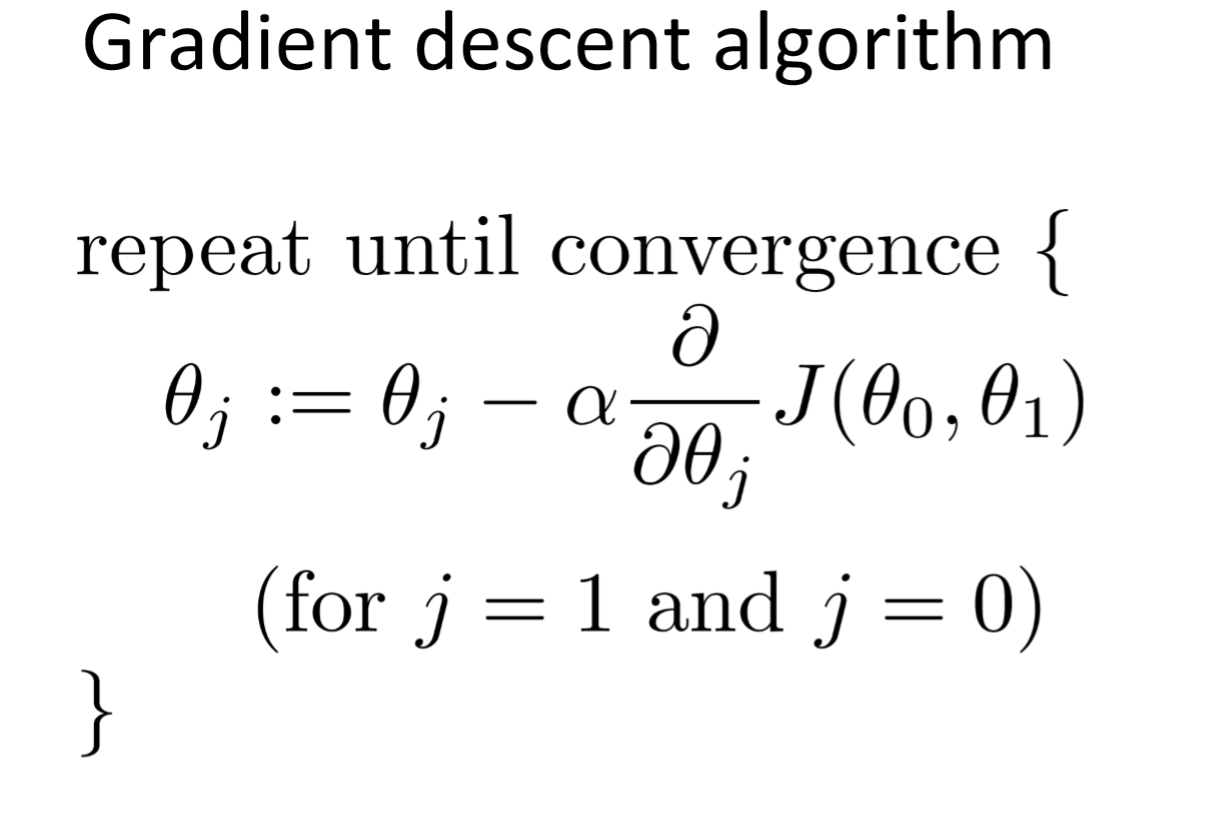

MathType: Gradient descent is an iterative optimization algorithm for finding local minima of multivariate functions. At each step, the algorithm moves in the direction opposite to the gradient, consequently reducing

By a mysterious writer

Last updated 19 January 2025
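The update rule described in the summary above (step against the gradient at each iteration) can be sketched as follows. This is a minimal illustration, not code from the original page: the function name `gradient_descent`, the fixed `learning_rate`, the stopping tolerance `tol`, and the example quadratic are all assumptions chosen for the demonstration.

```python
import math


def gradient_descent(grad, x0, learning_rate=0.1, max_iters=1000, tol=1e-8):
    """Follow the negative gradient from x0 until the gradient is nearly zero.

    grad: hypothetical callable returning the gradient as a list of floats.
    learning_rate, max_iters, tol: illustrative defaults, not tuned values.
    """
    x = list(x0)
    for _ in range(max_iters):
        g = grad(x)
        # Stop once the gradient norm is small: we are near a stationary point.
        if math.sqrt(sum(gi * gi for gi in g)) < tol:
            break
        # Move in the direction opposite to the gradient.
        x = [xi - learning_rate * gi for xi, gi in zip(x, g)]
    return x


# Example function (an assumption for illustration):
# f(x, y) = (x - 1)^2 + 2 * (y + 2)^2, whose only minimum is at (1, -2).
def grad_f(p):
    x, y = p
    return [2.0 * (x - 1.0), 4.0 * (y + 2.0)]


minimum = gradient_descent(grad_f, x0=[0.0, 0.0])
print(minimum)
```

With a fixed step size the method only finds a local minimum, and a step that is too large can cause it to diverge; the value 0.1 works here because the example function is a well-conditioned quadratic.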

Linear Regression with Multiple Variables Machine Learning, Deep Learning, and Computer Vision

Optimization Techniques used in Classical Machine Learning ft: Gradient Descent, by Manoj Hegde

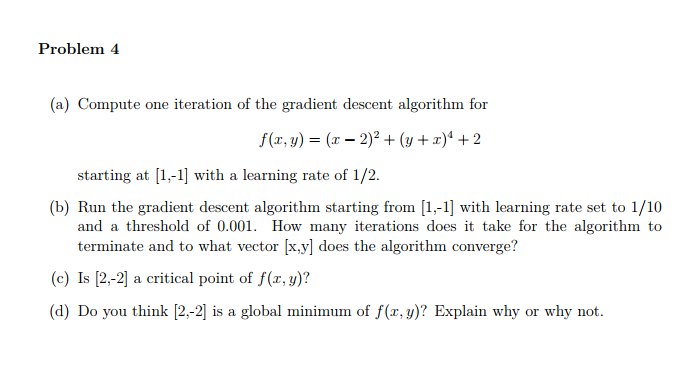

Solved Problem 4 (a) Compute one iteration of the gradient

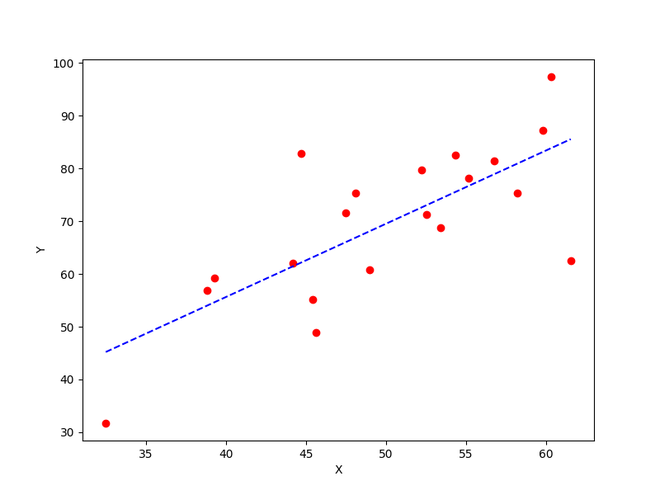

How to implement a gradient descent in Python to find a local minimum ? - GeeksforGeeks

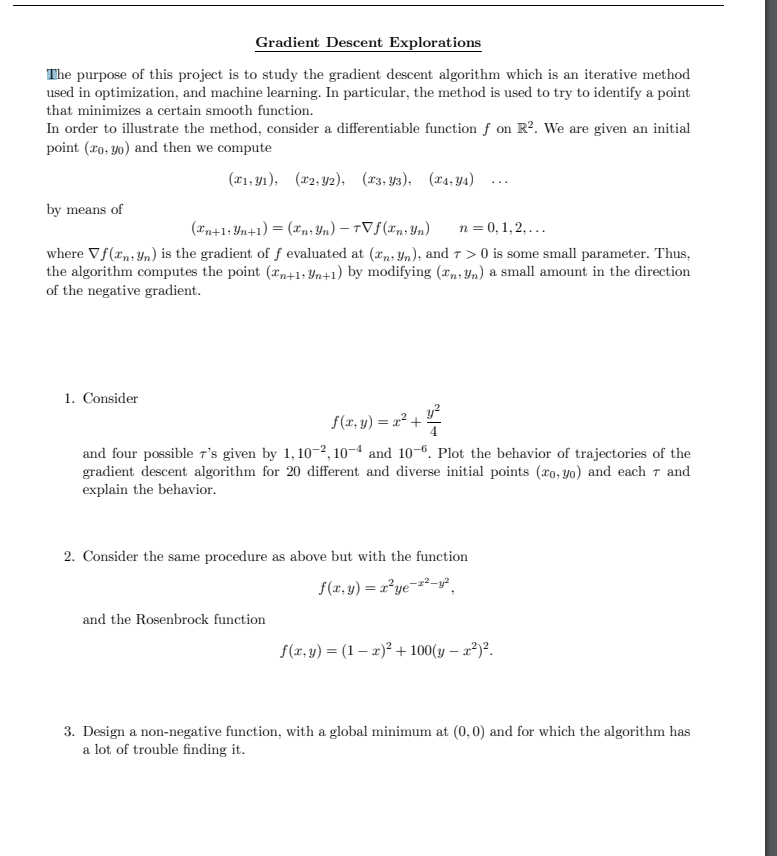

The purpose of this project is to study the gradient

machine learning - Java implementation of multivariate gradient descent - Stack Overflow

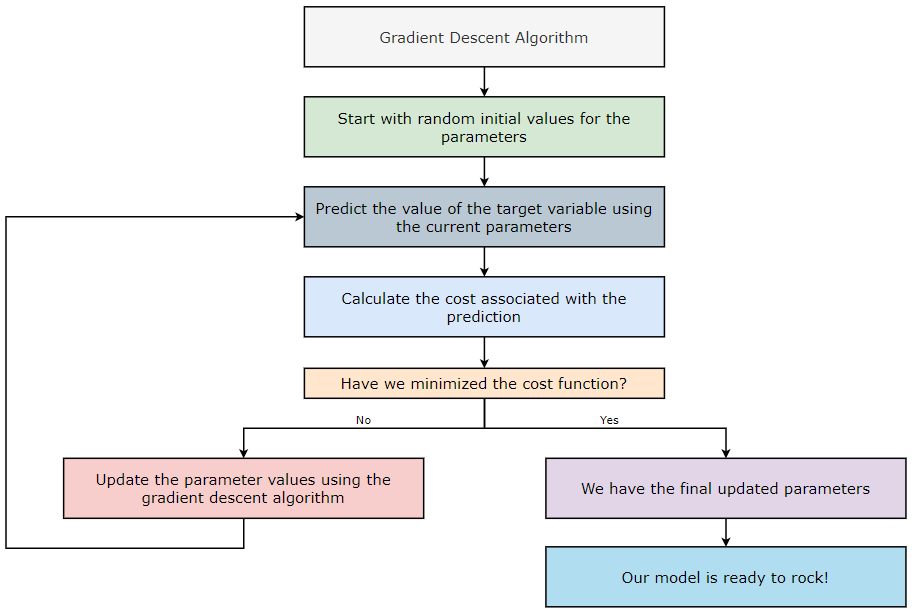

The Gradient Descent Algorithm – Towards AI

Mathematical Intuition behind the Gradient Descent Algorithm – Towards AI

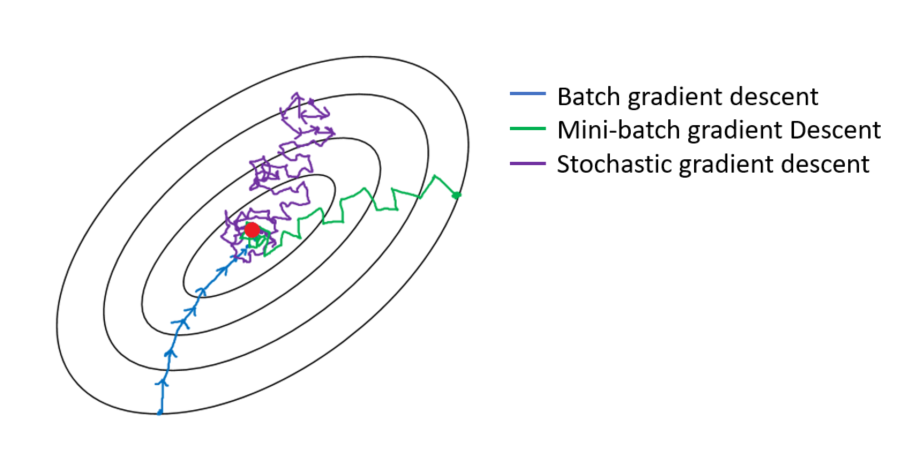

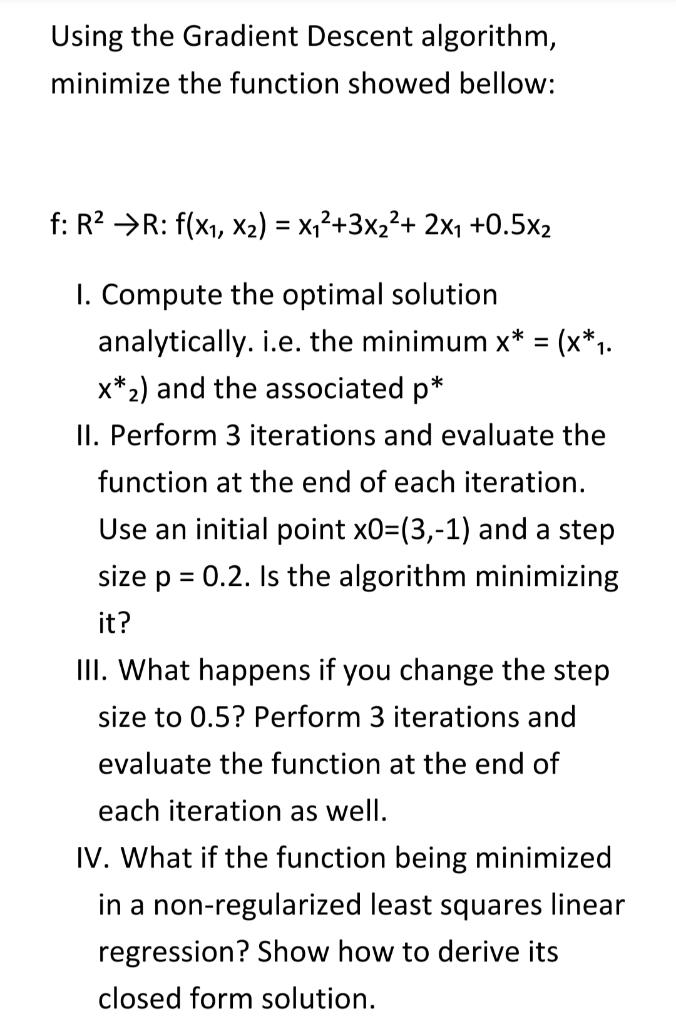

Solved Using the Gradient Descent algorithm, minimize the

Gradient descent optimization algorithm.

Recommended for you

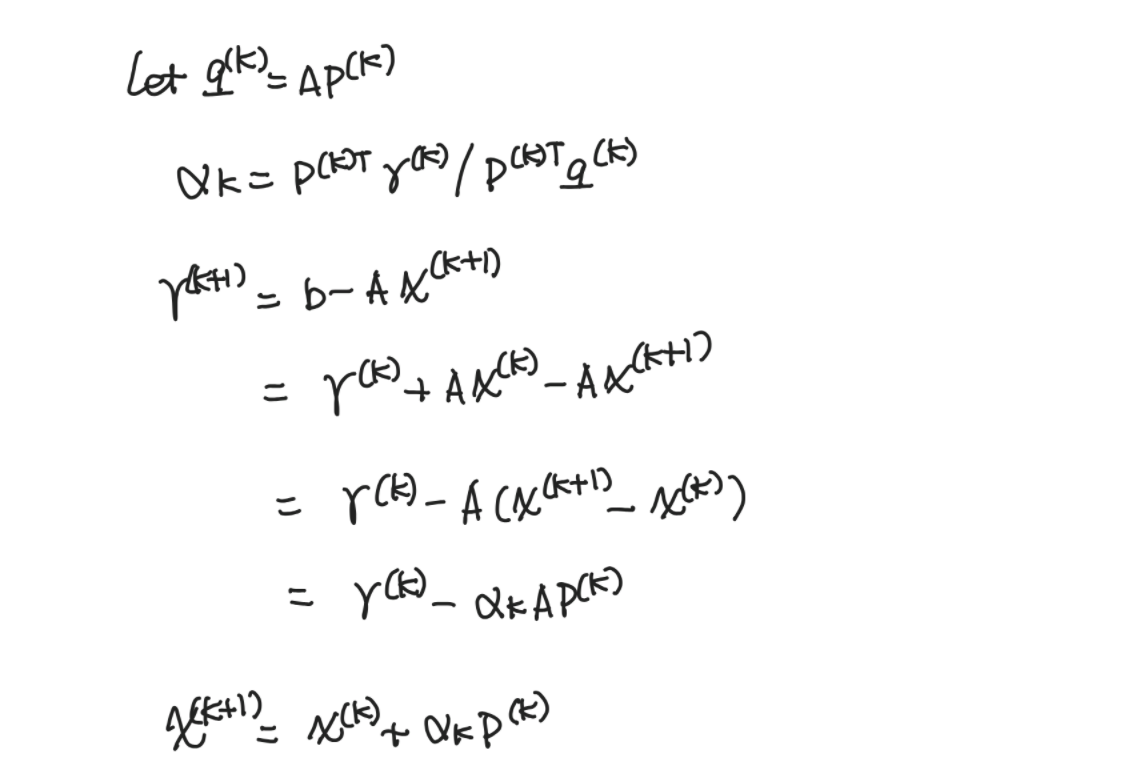

Method of Steepest Descent

Descent method — Steepest descent and conjugate gradient, by Sophia Yang, Ph.D.

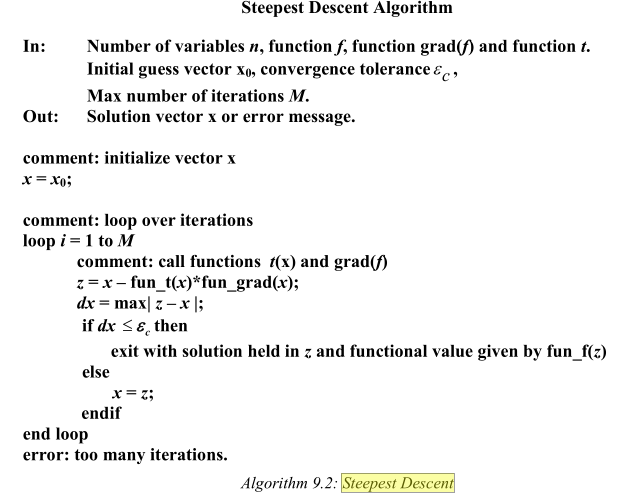

Steepest Descent Method

Steepest Descent Method - an overview

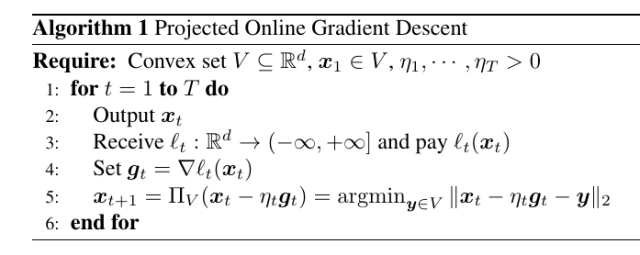

Online Gradient Descent – Parameter-free Learning and Optimization Algorithms

Write a MATLAB program for the steepest descent

Using the Gradient Descent Algorithm in Machine Learning, by Manish Tongia

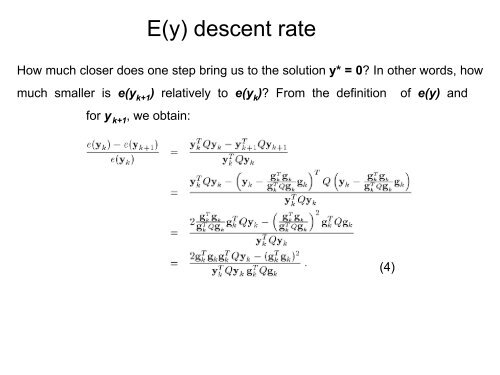

Steepest Descent Rate

Steepest descent method in sc

![Steepest Descent and Conjugate Gradient Methods with Variable Preconditioning (PDF)](https://d3i71xaburhd42.cloudfront.net/a0174a41c7d682aeb1d7e7fa1fbd2404e037a638/11-Figure8.1-1.png)

[PDF] Steepest Descent and Conjugate Gradient Methods with Variable Preconditioning
